# Lite-MCP-Client

## 📝 Project Introduction

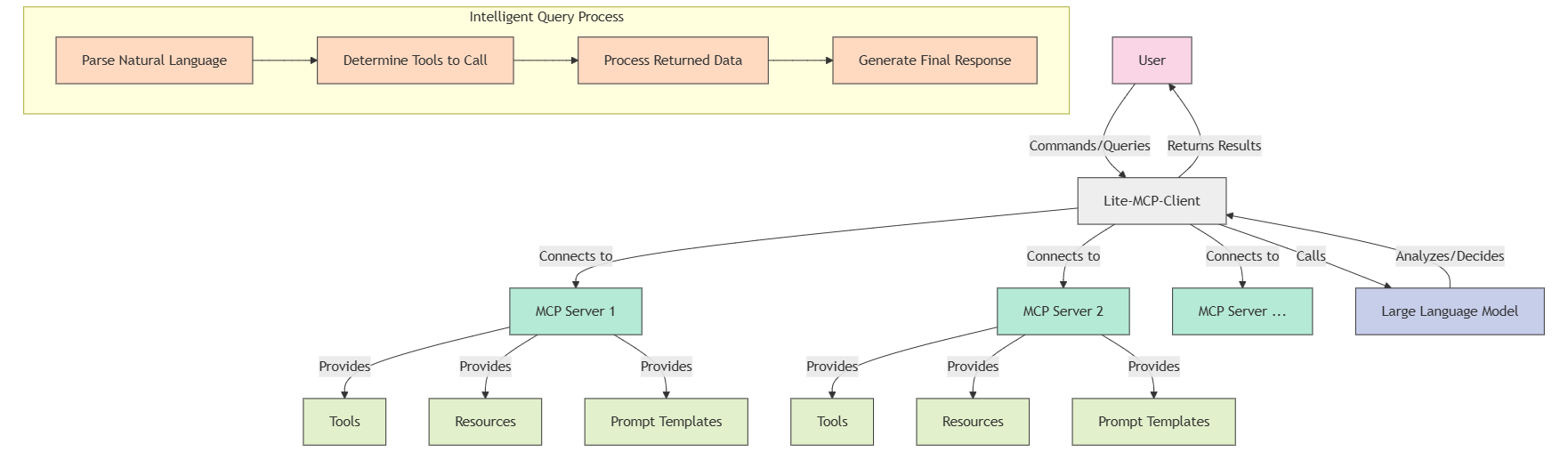

Lite-MCP-Client is a lightweight command-line client for MCP (Model Context Protocol) servers. It connects to one or more MCP servers and lets you invoke the tools, resources, and prompt templates they provide. It also integrates with large language models, so a natural-language query can be answered by automatically selecting and calling the appropriate tools.

## ✨ Main Features

- **Multi-server Connection Management**: Connect and manage multiple MCP servers simultaneously

- **Support for Various Server Types**: Compatible with STDIO and SSE server types

- **Tool Invocation**: Easily invoke various tools provided by the server

- **Resource Access**: Access resources provided by the server

- **Prompt Template Usage**: Use prompt templates defined by the server

- **Intelligent Querying**: Automatically determine which tools and resources to use through natural language queries

- **Flexible Interaction Modes**: Support for command-line parameters and interactive terminal interface

- **Intelligent Conversation History Management**: Maintain session context for continuous dialogue

## 🚀 Installation Guide

### Prerequisites

- Python 3.11+

- pip or uv package manager

### Installation Methods

#### Method 1: Install from PyPI

```bash
# Install using pip
pip install lite-mcp-client

# Or install using uv
uv pip install lite-mcp-client
```

#### Method 2: Install from Source Code

```bash
# Clone the repository
git clone https://github.com/sligter/lite-mcp-client
cd lite-mcp-client

# Install dependencies using uv
uv sync
```

### Configure Environment Variables

```bash
cp .env.example .env
```

## 📖 Usage Instructions

### Basic Usage

#### Using the Installed Command-line Tool

```bash
# Start interactive mode
lite-mcp-client --interactive

# Use a specific server
lite-mcp-client --server "Server Name"

# Connect to all default servers
lite-mcp-client --connect-all

# Execute an intelligent query
lite-mcp-client --query "Query trending news on Weibo and summarize"

# Or pass the query directly as a positional argument
lite-mcp-client "Query trending news on Weibo and summarize"

# Invoke a specific tool
lite-mcp-client --call "server_name.tool_name" --params '{"param1": "value1"}'

# Get a resource
lite-mcp-client --get "server_name.resourceURI"

# Use a prompt template
lite-mcp-client --prompt "server_name.prompt_name" --params '{"param1": "value1"}'

# Run a query, show the result, then stay in interactive mode
lite-mcp-client --query "Get trending topics on Weibo" --interactive
```
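When calling the client from a script, the JSON passed to `--params` is easy to get wrong with manual shell quoting. The sketch below builds the argument list in Python and serializes the parameters with `json.dumps`. It uses only the `--call` and `--params` flags shown above; the helper name `build_call_argv` and the tool name `fetch` are hypothetical and chosen for illustration.

```python
import json


def build_call_argv(server: str, tool: str, params: dict) -> list[str]:
    """Build the argument list for `lite-mcp-client --call ... --params ...`."""
    return [
        "lite-mcp-client",
        "--call", f"{server}.{tool}",
        # json.dumps produces valid JSON, so no manual quoting is needed
        "--params", json.dumps(params),
    ]


# "fetch" is a hypothetical tool name; use `tools <server_name>` to list real ones.
argv = build_call_argv("Fetch", "fetch", {"url": "https://example.com"})
print(argv)

# To actually run it (requires the client to be installed and configured):
#   import subprocess
#   subprocess.run(argv, check=True)
```

Passing the list to `subprocess.run` without `shell=True` avoids shell quoting entirely, since each element reaches the client as a single argument.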

#### Run Directly from Source Code

```bash
# Start interactive mode
uv run python -m lite_mcp_client.main --interactive

# Or, without uv
python -m lite_mcp_client.main --interactive

# Use a specific server
uv run python -m lite_mcp_client.main --server "Server Name"

# Connect to all default servers
uv run python -m lite_mcp_client.main --connect-all

# Execute an intelligent query
uv run python -m lite_mcp_client.main --query "Query trending news on Weibo and summarize"

# Or pass the query directly as a positional argument
uv run python -m lite_mcp_client.main "Query trending news on Weibo and summarize"

# Invoke a specific tool
uv run python -m lite_mcp_client.main --call "server_name.tool_name" --params '{"param1": "value1"}'

# Get a resource
uv run python -m lite_mcp_client.main --get "server_name.resourceURI"

# Use a prompt template
uv run python -m lite_mcp_client.main --prompt "server_name.prompt_name" --params '{"param1": "value1"}'

# Run a query, show the result, then stay in interactive mode
uv run python -m lite_mcp_client.main --query "Get trending topics on Weibo" --interactive
```

### Advanced Usage

```bash
# Execute a complex task, automatically selecting tools (using the installed version)
lite-mcp-client "Get today's tech news, analyze AI-related content, and generate a summary report"

# Use a custom configuration file
lite-mcp-client --config custom_config.json --query "Analyze latest data"

# Redirect the result to a file
lite-mcp-client --get "Fetch.webpage" --params '{"url": "https://example.com"}' > webpage.html
```

### Configuration File

The default configuration file is `mcp_config.json`, formatted as follows:

```json
{
  "mcp_servers": [
    {
      "name": "Trending Topic Query",
      "type": "stdio",
      "command": "uvx",
      "args": ["mcp-newsnow"],
      "env": {},
      "description": "Query trending topics"
    },
    {
      "name": "Fetch",
      "type": "stdio",
      "command": "uvx",
      "args": ["mcp-server-fetch"],
      "env": {},
      "description": "Access specified link"
    },
    {
      "name": "Other Services",
      "type": "sse",
      "url": "http://localhost:3000/sse",
      "headers": {},
      "description": "Description of other services"
    }
  ],
  "default_server": ["Trending Topic Query", "Fetch"]
}
```
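The relationship between `mcp_servers` and `default_server` can be checked with a few lines of Python. This is a minimal sketch, not the client's own loading code: it assumes that every name in `default_server` must match the `name` of an entry in `mcp_servers`, and the function name `default_servers` is hypothetical.

```python
import json

# A trimmed copy of the example configuration above.
CONFIG_TEXT = """
{
  "mcp_servers": [
    {"name": "Trending Topic Query", "type": "stdio", "command": "uvx", "args": ["mcp-newsnow"]},
    {"name": "Fetch", "type": "stdio", "command": "uvx", "args": ["mcp-server-fetch"]},
    {"name": "Other Services", "type": "sse", "url": "http://localhost:3000/sse"}
  ],
  "default_server": ["Trending Topic Query", "Fetch"]
}
"""


def default_servers(config: dict) -> list[dict]:
    """Return the server entries named in `default_server`, in listed order."""
    by_name = {server["name"]: server for server in config["mcp_servers"]}
    missing = [name for name in config["default_server"] if name not in by_name]
    if missing:
        raise ValueError(f"default_server names not defined in mcp_servers: {missing}")
    return [by_name[name] for name in config["default_server"]]


config = json.loads(CONFIG_TEXT)
for server in default_servers(config):
    print(server["name"], server["type"])
```

Running a check like this before starting the client can catch a misspelled server name early, rather than at connection time.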

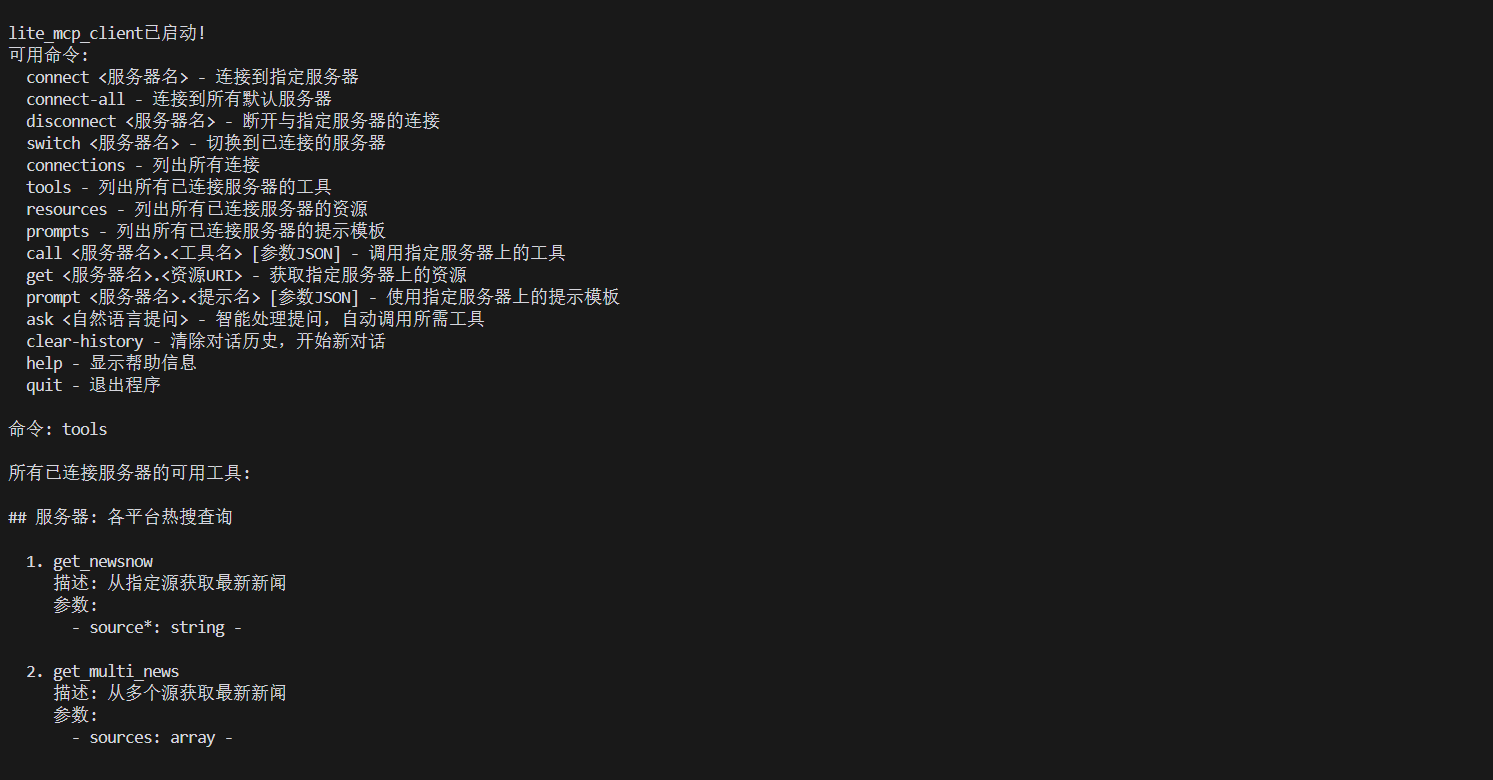

### Interactive Commands

In interactive mode, the following commands are supported:

- `connect <server_name>` - Connect to the specified server

- `connect-all` - Connect to all default servers

- `disconnect <server_name>` - Disconnect from the specified server

- `switch <server_name>` - Make the specified connected server the current server

- `connections (conn)` - List all connections and their statuses

- `tools [server_name]` - List available tools for all servers or for the specified server

- `resources (res) [server_name]` - List available resources for all servers or for the specified server

- `prompts [server_name]` - List available prompt templates for all servers or for the specified server

- `call <srv.tool> [params]` - Invoke the tool on the specified server

- `call <tool> [params]` - Invoke the tool on the current server

- `get <srv.uri>` - Get resources from the specified server

- `get <uri>` - Get resources from the current server

- `prompt <srv.prompt> [params]` - Use the prompt template on the specified server

- `prompt <prompt> [params]` - Use the prompt template on the current server

- `ask <natural_language_question>` - Have the LLM process the question, automatically selecting and invoking tools

- `clear-history (clh)` - Clear the conversation history of 'ask' commands

- `help` - Display help information

- `quit / exit` - Exit the program

## 🔧 Advanced Configuration

### Server Configuration Options

| Parameter | Type | Description |
|-----------|------|-------------|
| name | string | Server name |
| type | string | Server type ("stdio" or "sse") |
| command | string | Command to start the STDIO server |
| args | list | Command-line arguments |
| env | object | Environment variables |
| url | string | SSE server URL |
| headers | object | HTTP request headers |
| description | string | Server description |

## 📚 Dependencies

- `asyncio`: Asynchronous I/O support (Python standard library)

- `mcp`: MCP protocol client library

- `langchain_openai`: OpenAI model integration

- `langchain_google_genai`: Google generative AI model integration

- `langchain_anthropic`: Anthropic Claude model integration

- `langchain_aws`: AWS Bedrock model integration

- `dotenv`: Environment variable management (from the `python-dotenv` package)

- `json`: JSON data processing (Python standard library)

## 📄 License

This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

*Note: This client only provides an interface to MCP services; specific functionalities depend on the tools and resources provided by the connected servers.*
